Project Hyperrealism

Welcome to Project Hyperrealism, an exciting new experiment where we are pushing the boundaries of AI image generation. We aim to develop cutting-edge workflows and diffusion models capable of creating hyper-realistic images indistinguishable from photographs. By combining advanced techniques and tools such as latent diffusion models, LoRA, SafeTensors, super-resolution, and GANs, we hope to gain unprecedented control over image synthesis, enabling us to modify and edit generated content at will.

The possibilities are endless – travel back in time by altering objects and environments in a generated scene, create imaginary characters that look real enough to exist in the physical world, or touch up portraits like a digital makeup artist. The line between fiction and reality blurs as we refine our workflows using neural rendering, semantic manipulation, and style transfer techniques.

Business Applications of Hyperreal Images and Videos

Here are some potential business uses and applications of hyper-realistic AI-generated images:

- Advertising and marketing: Rapidly create product visuals, lifestyle scenes, and promotional content without extensive photoshoots. Tailor imagery to campaigns and target demographics.

- Concept design: Generate hyperreal product concepts, architectural renders, and interior visualizations without physical prototypes or modeling, which allows faster iteration.

- Entertainment: Production studios could cut costs on CGI and post-processing to create realistic virtual scenes, characters, and objects for movies and games.

- Simulations: Architects and engineers can simulate lifelike building infrastructure and test different variations easily with AI-generated visuals.

- Fashion and retail: Clothing brands and online stores can automatically generate model photos or mix and match apparel without photoshoots.

- Social media: Influencers’ social media managers can instantly generate endless high-quality images for posts that capture audience attention.

- Automotive: Auto manufacturers can iterate vehicle prototype designs faster through photoreal visualizations rather than physical modeling.

- User-generated content: AR filters, virtual avatars, game skins and more can leverage lifelike image generation to create compelling user experiences.

- Training data: The AI models can artificially expand limited training datasets for other computer vision tasks by generating diverse, realistic images.

Workflows, Tools, and Techniques

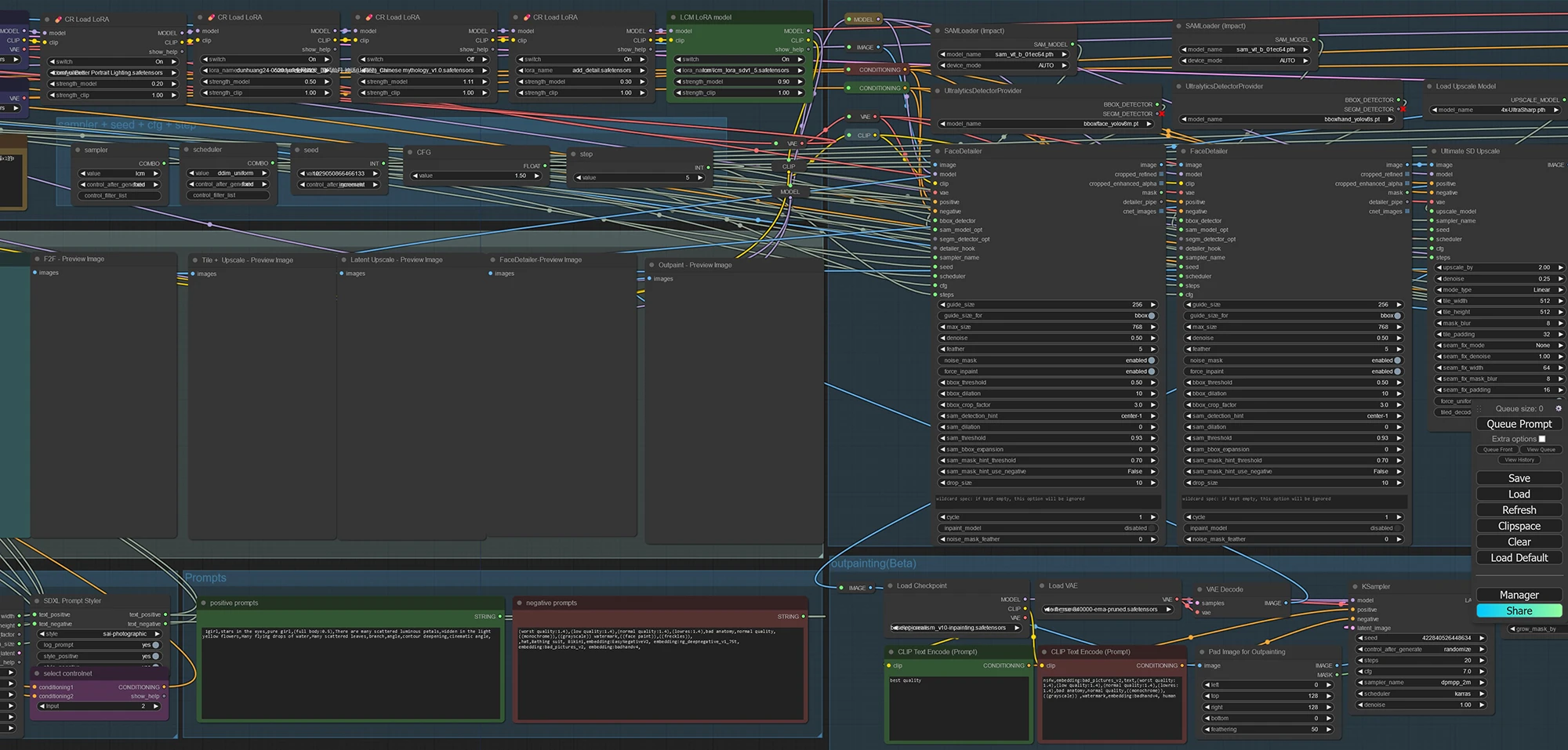

At Future Disruptor, we recently transitioned our entire AI diffusion workflow from AUTOMATIC1111 to ComfyUI to gain more advanced generative capabilities. By integrating ComfyUI into our pipeline, we have built more complex creative workflows that leverage leading-edge AI techniques for image generation.

The key advantage we have realized is the sheer breadth of diffusion models available in ComfyUI. Beyond Stable Diffusion, we now have access to Imagen, Realistic Voxel Diffusion, and Continual Diffusion – each with unique strengths. We train custom models optimized for our needs, like architecture visualization or futuristic scene generation.

ComfyUI’s LoRA implementation has proven invaluable, allowing us to quickly adapt models to new concepts without losing prior learnings. This maintains quality across vastly different prompts.
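To make the LoRA idea concrete, here is a minimal sketch in plain Python. It is not ComfyUI's implementation; it only illustrates the standard low-rank update, where a frozen weight matrix `W` is augmented by a small trainable product `B·A` scaled by `alpha / r`. The matrix sizes, the `alpha` value, and all function names are assumptions chosen for illustration.

```python
import random

def matvec(M, v):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def init_lora(d_out, d_in, r, seed=0):
    """Create adapter matrices: A is small random noise, B starts at zero."""
    rng = random.Random(seed)
    A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(r)]
    B = [[0.0] * r for _ in range(d_out)]
    return A, B

def lora_forward(x, W, A, B, alpha):
    """Compute W x + (alpha / r) * B (A x). W stays frozen; only A and B would be trained."""
    r = len(A)  # the rank is the number of rows in A
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]

# Toy example: a 3x4 "pretrained" weight matrix and one input vector.
W = [[1.0, 2.0, 0.0, 0.0],
     [0.0, 1.0, 0.0, 3.0],
     [0.5, 0.0, 1.0, 0.0]]
x = [1.0, 1.0, 2.0, -1.0]

A, B = init_lora(d_out=3, d_in=4, r=2)
base_out = matvec(W, x)
lora_out = lora_forward(x, W, A, B, alpha=4.0)

# Because B starts at zero, the adapted layer initially matches the base layer.
print(base_out == lora_out)
```

Since `W` itself is never modified, removing or disabling the adapter restores the original model exactly, which is one way to read "without losing prior learnings".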

Centralizing diffusion model access, conditioning techniques, asset libraries, and post-processing tools into one platform has supercharged our AI capabilities. We can craft creative pipelines tailored to each project’s needs in a fraction of the time. This has accelerated both our output and our workshops.
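As a rough illustration of what such a pipeline looks like, the sketch below builds a small text-to-image graph as a Python dictionary in the node-graph style that ComfyUI uses for workflows exported in API format. The node class names and input names reflect ComfyUI's built-in nodes as we understand them and may differ between versions; the checkpoint and LoRA file names, the prompts, and the sampler settings are hypothetical placeholders, not our production values.

```python
import json

def build_txt2img_workflow(prompt, negative, ckpt_name, lora_name, seed=0):
    """Return a node graph: each key is a node id, links are [node_id, output_index]."""
    return {
        "1": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": ckpt_name}},
        "2": {"class_type": "LoraLoader",
              "inputs": {"model": ["1", 0], "clip": ["1", 1],
                         "lora_name": lora_name,
                         "strength_model": 0.8, "strength_clip": 0.8}},
        "3": {"class_type": "CLIPTextEncode",
              "inputs": {"clip": ["2", 1], "text": prompt}},
        "4": {"class_type": "CLIPTextEncode",
              "inputs": {"clip": ["2", 1], "text": negative}},
        "5": {"class_type": "EmptyLatentImage",
              "inputs": {"width": 1024, "height": 1024, "batch_size": 1}},
        "6": {"class_type": "KSampler",
              "inputs": {"model": ["2", 0], "positive": ["3", 0],
                         "negative": ["4", 0], "latent_image": ["5", 0],
                         "seed": seed, "steps": 30, "cfg": 7.0,
                         "sampler_name": "euler", "scheduler": "normal",
                         "denoise": 1.0}},
        "7": {"class_type": "VAEDecode",
              "inputs": {"samples": ["6", 0], "vae": ["1", 2]}},
        "8": {"class_type": "SaveImage",
              "inputs": {"images": ["7", 0], "filename_prefix": "hyperrealism"}},
    }

def dangling_links(workflow):
    """List (node_id, input_name) pairs whose link points at a missing node."""
    problems = []
    for node_id, node in workflow.items():
        for name, value in node["inputs"].items():
            if isinstance(value, list) and len(value) == 2 and value[0] not in workflow:
                problems.append((node_id, name))
    return problems

workflow = build_txt2img_workflow(
    prompt="portrait photo of an elderly fisherman, natural light",  # hypothetical prompt
    negative="blurry, cartoon",
    ckpt_name="example_checkpoint.safetensors",  # hypothetical file name
    lora_name="example_style_lora.safetensors",  # hypothetical file name
)
payload = json.dumps({"prompt": workflow})
print(dangling_links(workflow), len(payload) > 0)
```

A locally running ComfyUI server would typically accept a payload of this shape over HTTP; the exact endpoint and port depend on the installation, so that step is left out of the sketch.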

Here are the tools and techniques we are utilizing:

- Stable Diffusion: This state-of-the-art generative model produces amazing photorealistic details right out of the box. Its diffusion modeling generates crisp, coherent images that mirror real life.

- LoRA (Low-Rank Adaptation): Implementing LoRA allows us to iteratively adapt diffusion models to new concepts while retaining prior learnings. This unlocks consistent photorealism for new prompts.

- Textual prompting: Carefully craft prompts describing the desired scenes and attributes. This guides Stable Diffusion to generate images that match the intent.

- Semantic maps: Design semantic layouts mapping compositions, shapes, and objects. This structural scaffolding makes generated images cleanly composed.

- StyleGAN assets: Use the StyleGAN asset library, which contains photorealistic 3D objects, textures, and materials that can be seamlessly integrated into generations.

- Warp tool: This lets us subtly refine and alter any part of a generated image. Use it to perfect proportions, positioning, and perspectives.

- Super-resolution: Upscale all generations 2-4x with super-resolution for ultra-crisp detail at the pixel level, like a DSLR camera.

- Post-processing: Apply lighting, color, and texture filters for cinematic quality. Depth-based sharpening adds realism.

- Gigapixel export: Export creations at multi-gigapixel sizes, larger than any camera can capture. You can zoom endlessly into these giant images and see lifelike details.

- 3D scene generator: This allows the creation and rendering of fully 3D environments, letting you adjust lighting, materials, and positioning to achieve photorealism. The scenes look tangibly real.

- Spatial prompting: Use spatial prompts to pinpoint exactly where objects and characters should be placed in a generated 3D scene, gaining precise control.

- Realistic human models: Use state-of-the-art models to generate human portraits and figures that are indistinguishable from photographs. Integrate them into images for ultra-realism.

- Object eraser: This tool lets us cleanly erase and remove any unwanted objects or artifacts from generations, giving total creative flexibility.

- Landscape generator: Use this to synthesize stunning outdoor environments, from lush forests to deserts to snowy mountains. It provides immense scenic variety.

- Gigapixel face enhancer: This upscales portraits to gigapixel resolution while preserving perfect facial proportions and features. The details look better than in real life.
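To put the super-resolution and gigapixel figures from the list above in perspective, the short sketch below works through the arithmetic. The 1024x1024 base resolution is an assumption for illustration, and the helper names are hypothetical; the code only computes pixel counts and does no actual upscaling.

```python
def upscaled_size(width, height, factor):
    """Return the (width, height) after upscaling each side by the given factor."""
    return width * factor, height * factor

def megapixels(width, height):
    """Return the pixel count in millions of pixels."""
    return width * height / 1_000_000

def passes_to_reach(width, height, factor, target_pixels):
    """Count how many repeated upscale passes are needed to reach target_pixels."""
    passes = 0
    while width * height < target_pixels:
        width, height = upscaled_size(width, height, factor)
        passes += 1
    return passes

base_w, base_h = 1024, 1024  # assumed size of a single generation

for factor in (2, 4):
    w, h = upscaled_size(base_w, base_h, factor)
    print(f"{factor}x upscale: {w}x{h}, about {megapixels(w, h):.1f} MP")

GIGAPIXEL = 1_000_000_000
print("4x passes to reach one gigapixel:", passes_to_reach(base_w, base_h, 4, GIGAPIXEL))
print("2x passes to reach one gigapixel:", passes_to_reach(base_w, base_h, 2, GIGAPIXEL))
```

Under these assumptions a single 4x pass yields roughly 16.8 megapixels, so a true gigapixel export requires several chained upscale passes (or tiling), with memory and processing time growing accordingly.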

Project Hyperrealism represents the next evolution in computational creativity and visual media. Join us as we step into a future of limitless generation powered by AI. Click here to access Paroxysm – AI Art Gallery. Stay tuned for progress on this project.

Update: Dive into the fascinating journey of creating Nouna, an AI character living in the year 2072. This case study unveils the blend of creativity and technology behind Nouna, shedding light on the future of AI characters in digital storytelling and applications.

This case study covers project objectives, tools and technologies used, story, persona chart, development process, challenges, solutions, applications, use cases, and future directions.

Creating Nouna: A Consistent AI Character Case Study
