The Future of Artificial Neurons

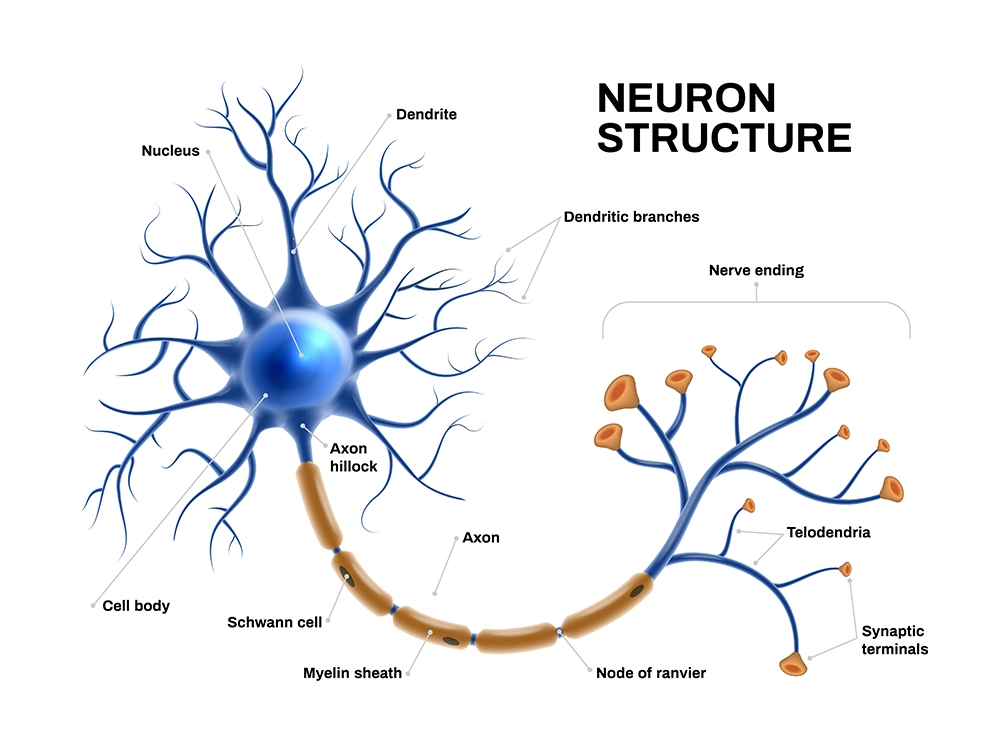

Artificial neurons are mathematical functions that mimic the behavior of biological neurons in a neural network. They are the basic units of computation in artificial neural networks, which are systems that can learn from data and perform tasks such as classification, regression, and generation.

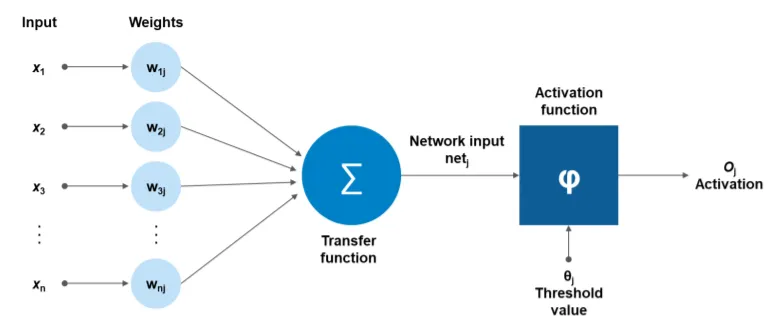

They can process one or more inputs, apply weights and biases, and produce an output through an activation function. The activation function determines how the artificial neuron responds to the input, and it can be linear, nonlinear, or even oscillating. They can be connected to other artificial neurons in different layers and architectures, forming complex networks that can learn from data and perform various tasks.

Practical Applications

Here are the practical applications of artificial neurons in real life:

- Medical diagnosis: Diagnose diseases and predict patient outcomes, such as cancer detection, heart disease prediction, and stroke risk assessment.

- Fraud detection: Detect fraudulent activity, such as credit card fraud and insurance fraud, in banking, insurance, and retail.

- Natural Language Processing (NLP): Understand and process human language for machine translation, chatbots, and sentiment analysis.

- Image recognition: Identify objects in images, such as faces, cars, and animals, for facial recognition software, self-driving cars, and medical imaging.

- Speech recognition: Convert spoken language into text for voice assistants, dictation software, and speech-to-text translation.

- Recommendation systems: Recommend products, movies, music, and other items to users for e-commerce websites, streaming services, and social media platforms.

Importance for Our Future

Artificial neuron technology is important for our future because it can help us create powerful artificial intelligence systems to solve complex real-world problems, such as medical diagnosis, image processing, social media, and more.

It can also help us understand how the brain works and how we can enhance our cognitive abilities. It can transform various fields and industries, such as healthcare, education, entertainment, and security.

How Does It Work?

Step-by-step process of how artificial neurons work:

- The input layer receives the data from the outside world, such as images, text, or numbers. Each input node represents a feature or a variable of the data. For example, if the data is an image of a handwritten digit, each input node can represent a pixel value of the image.

- The input layer passes the data to the hidden layer, which consists of artificial neurons. Each artificial neuron has one weight per input and a bias. The weights determine how much influence each input has on the output, and the bias shifts the result.

- The artificial neuron computes a weighted sum of the inputs, adds the bias, and applies an activation function to produce an output. The activation function, which is usually nonlinear, determines how the artificial neuron responds to the input. For example, a common activation function is the sigmoid function, which maps any input to a value between 0 and 1.

- The output of the artificial neuron is then passed to the next layer, which can be another hidden layer or the output layer. The output layer produces the final result of the neural network, such as a prediction, a classification, or a generation. The output layer can have one or more nodes depending on the task. For example, if the task is to classify a handwritten digit into one of 10 classes, the output layer can have ten nodes, each representing the probability that the digit belongs to that class.

- The neural network can be trained to adjust the weights and biases of the artificial neurons based on the data and the desired output. This process is called learning or optimization, and it can be done using various algorithms, such as gradient descent (with the gradients computed by backpropagation) or genetic algorithms. The goal of the learning process is to minimize the error, that is, the difference between the actual output and the desired output. A loss function measures this error, quantifying how well the neural network performs on the data. For example, a common loss function is the mean squared error, which calculates the average of the squared differences between the actual and desired outputs.
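
The steps above can be sketched in a few lines of plain Python. Treat this as a minimal illustration under assumptions rather than a reference implementation: the function names (`neuron`, `layer`, `forward`, `train_neuron`), the AND-gate data, the starting weights, and the learning rate are all made up for this example. It uses a sigmoid activation, mean squared error, and gradient descent on a single neuron.

```python
import math

def sigmoid(z):
    """Map any real number to a value between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus the bias, passed through the activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)

def layer(inputs, weight_rows, biases):
    """A layer is a list of neurons that all see the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

def forward(inputs, hidden_w, hidden_b, output_w, output_b):
    """Input layer -> hidden layer -> output layer."""
    hidden = layer(inputs, hidden_w, hidden_b)
    return layer(hidden, output_w, output_b)

def mse(outputs, targets):
    """Mean squared error between actual and desired outputs."""
    return sum((o - t) ** 2 for o, t in zip(outputs, targets)) / len(targets)

def train_neuron(samples, weights, bias, learning_rate=0.5, epochs=2000):
    """Gradient descent on one sigmoid neuron, using squared error per sample."""
    for _ in range(epochs):
        for inputs, target in samples:
            y = neuron(inputs, weights, bias)
            # Chain rule: d(error)/dz = 2 * (y - target) * sigmoid'(z),
            # where sigmoid'(z) = y * (1 - y).
            delta = 2 * (y - target) * y * (1 - y)
            weights = [w - learning_rate * delta * x for w, x in zip(weights, inputs)]
            bias -= learning_rate * delta
    return weights, bias

# Hypothetical task: learn the logical AND of two inputs.
AND_SAMPLES = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

def dataset_loss(weights, bias):
    outputs = [neuron(x, weights, bias) for x, _ in AND_SAMPLES]
    targets = [t for _, t in AND_SAMPLES]
    return mse(outputs, targets)

# Forward pass through a tiny 2-2-1 network with arbitrary example weights.
print(forward([1.0, 0.0], [[0.5, -0.5], [0.3, 0.8]], [0.0, 0.1], [[1.0, -1.0]], [0.0]))

# Train a single neuron and compare the loss before and after.
loss_before = dataset_loss([0.0, 0.0], 0.0)
trained_w, trained_b = train_neuron(AND_SAMPLES, [0.0, 0.0], 0.0)
loss_after = dataset_loss(trained_w, trained_b)
print(loss_before, loss_after)  # the loss should drop as training proceeds
```

A single neuron is enough here because AND is linearly separable. Training a multi-layer network would need backpropagation to carry the error back through the hidden layer, which this sketch leaves out.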

Future Evolution

Here are the ways artificial neurons can evolve in the future:

- They can become more biologically realistic and compatible, using organic or inorganic materials that communicate with natural cells and tissues. This could enable new applications in neuroscience, medicine, and bioengineering.

- They can incorporate more advanced learning algorithms and architectures, such as deep neural networks, recurrent neural networks, spiking neural networks, and neuromorphic computing. These could enhance the performance, efficiency, and adaptability of artificial neurons.

- They can expand their applications to various domains and challenges, such as natural language processing, computer vision, robotics, healthcare, security, and social good. These could increase the impact and value of artificial neurons for society.

Benefits for Organizations/Enterprises

Artificial neurons can help organizations/enterprises in various ways, such as:

- Improving decision-making by recognizing hidden patterns and correlations in data, clustering and classifying it, and making generalizations and inferences.

- Enhancing performance and efficiency using advanced learning algorithms and architectures, such as deep neural networks, recurrent neural networks, spiking neural networks, and neuromorphic computing.

- Increasing impact and value by applying artificial neurons to various domains and challenges, such as natural language processing, computer vision, robotics, healthcare, security, and social good.

Driving Adoption

Factors that are driving artificial neuron adoption are:

- The availability of large-scale data sets, powerful computing resources, and improved algorithms enables the training and deployment of deep neural networks. Deep learning, the branch of machine learning built on these networks, uses artificial neurons to achieve state-of-the-art results in many tasks.

- The advancement of neuroscience and bioengineering enables the creation and integration of artificial neurons with natural cells and tissues, opening new possibilities for brain-computer interfaces, prosthetics, and neuromorphic computing.

- The innovation and competition in the artificial intelligence market encourage the development and use of artificial neurons for various purposes, such as enhancing performance, efficiency, impact, and value.

Operational Challenges

These are the operational challenges affecting the expansion and adoption of artificial neuron technology:

- It requires training before it can operate, which can be time-consuming, resource-intensive, and prone to errors.

- It must be validated and verified to ensure accuracy, robustness, and generalizability.

- It has to be integrated and coordinated with other systems and components, such as data sources, user interfaces, and business processes.

- It has to be monitored and updated to cope with changing data, requirements, and environments.

- It has to be governed and regulated to comply with ethical and legal considerations, such as privacy, security, accountability, and fairness.

Success Stories

Some of the success stories of artificial neurons are:

- Scientists have built artificial neurons that fully mimic human brain cells, including the ability to translate chemical signals into electrical impulses and communicate with other human cells. These artificial neurons could one day be used to treat diseases such as Alzheimer’s, where neurons degenerate or die.

- Researchers have developed artificial neurons that can be implanted into the body to repair damaged nerve cells and restore lost functions, such as breathing, heartbeat, and movement. These artificial neurons are made of silicon and can communicate with biological neurons using electrical stimulation.

- Engineers have created a network of artificial neurons that can adapt and learn from their environment, using optical signals instead of electrical ones. This network can perform complex tasks like image recognition, pattern generation, and synchronization.

- Scientists have used artificial neurons to model and understand the behavior of natural neurons and their networks, such as the brain, the spinal cord, and the retina. This can help reveal the mechanisms and principles of neural computation, learning, and memory.

Types

Here are the different types of artificial neurons:

- Binary neuron: This simple artificial neuron produces a binary output (0 or 1) based on a threshold function. It is also known as a McCulloch–Pitts neuron or a linear threshold unit.

- Continuous neuron: This type of artificial neuron produces a continuous output based on a nonlinear activation function. The output range depends on the function: a sigmoid gives values between 0 and 1, tanh gives values between -1 and 1, and ReLU gives any non-negative value. It is often used in the context of backpropagation.

- Probabilistic neuron: This artificial neuron produces a probabilistic output (usually between 0 and 1) based on a Parzen window or kernel density estimation. It is used to approximate the probability distribution function of each class in a classification problem.
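
The three neuron types above can be sketched roughly as follows. This is an illustration under assumptions, not a standard API: the function names, the Gaussian kernel, the `sigma` width, the equal class priors, and the sample points are all choices made for this example.

```python
import math

def weighted_sum(inputs, weights):
    return sum(w * x for w, x in zip(weights, inputs))

def binary_neuron(inputs, weights, threshold):
    """McCulloch-Pitts style unit: output 1 if the weighted sum reaches the threshold."""
    return 1 if weighted_sum(inputs, weights) >= threshold else 0

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def relu(z):
    return max(0.0, z)

def continuous_neuron(inputs, weights, bias, activation=sigmoid):
    """Continuous output; the range depends on the activation function."""
    return activation(weighted_sum(inputs, weights) + bias)

def parzen_score(x, class_samples, sigma=1.0):
    """Unnormalized Gaussian Parzen-window density estimate of x for one class."""
    total = 0.0
    for sample in class_samples:
        squared_distance = sum((a - b) ** 2 for a, b in zip(x, sample))
        total += math.exp(-squared_distance / (2 * sigma ** 2))
    return total / len(class_samples)

def probabilistic_neuron(x, samples_by_class, sigma=1.0):
    """Return an estimated probability per class (equal priors assumed)."""
    scores = {label: parzen_score(x, points, sigma)
              for label, points in samples_by_class.items()}
    total = sum(scores.values())
    return {label: score / total for label, score in scores.items()}

# With weights of 1 and a threshold of 2, the binary neuron acts as an AND gate.
print(binary_neuron([1, 1], [1, 1], threshold=2))  # 1
print(binary_neuron([1, 0], [1, 1], threshold=2))  # 0

# The same weighted sum (-0.5) seen through three activation functions.
for activation in (sigmoid, math.tanh, relu):
    print(activation.__name__, continuous_neuron([1.0, 2.0], [0.5, -0.5], 0.0, activation))

# Hypothetical 2-D training points for two classes.
training_points = {"a": [(0.0, 0.0), (0.2, 0.1)], "b": [(2.0, 2.0), (2.1, 1.9)]}
print(probabilistic_neuron((0.1, 0.1), training_points))
```

The Parzen score skips the kernel's normalizing constant because it is the same for every class when `sigma` is shared, so it cancels out when the scores are turned into probabilities.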

Artificial neurons are also the building blocks of different kinds of artificial neural networks, such as feedforward, recurrent, convolutional, and spiking neural networks. Each type has its own architecture, learning algorithm, and applications.

Advantages

Here are the various advantages of artificial neurons:

- They can implement tasks that a linear model cannot, such as recognizing complex patterns, learning from data, and adapting to new situations.

- Thanks to their parallel and distributed structure, networks of artificial neurons can continue functioning even when some parts fail.

- They do not require reprogramming, as they can learn and update their weights and biases based on the data and the desired output.

- They can handle non-linear relationships between inputs and outputs, which is common in real-world problems.

- Hardware implementations that are not CMOS-based can offer simpler circuits, intrinsic and unpredictable randomness, and a smaller feature size than CMOS-based neurons.

Disadvantages

Here are the various disadvantages of artificial neurons:

- They require lots of computational power and data to train and operate.

- They are hard to explain and interpret, as they do not provide the logic behind their decisions.

- They are prone to overfitting, which means they can memorize the training data and perform poorly on new data.

- They need careful data preparation and optimization for production, which can be challenging and time-consuming.

- They lack clear guidelines for determining the best network structure and parameters, which often depend on trial and error.

- They can exhibit unpredictable behavior due to their non-linear and complex nature.

Ethical Concerns

These are the various ethical concerns associated with artificial neurons:

- There is potential for bias, discrimination, and harm to human and animal subjects, especially when transplanting human neurons into other species’ brains.

- Defining and measuring the moral status, consciousness, and intelligence of artificial neurons and their hybrids is challenging.

- The need for transparency, accountability, and explainability of the algorithms, data, and decisions of artificial neurons and their applications.

- The impact of artificial neurons on the environment, society, culture, and human identity.

- The responsibility and liability of creators, users, and regulators for artificial neurons and their outcomes.

Governance and Regulation

Different approaches and proposals exist to govern and regulate artificial neurons, depending on the intervention’s level, scope, and purpose. Here are some of the ways:

- Developing a legally binding treaty for artificial neurons that requires signatories to respect human rights, democracy, and the rule of law. This is the approach taken by the Council of Europe, a human rights organization with 46 countries as its members.

- Adopting nonbinding principles or guidelines that lay out the values and goals that should underpin artificial neurons’ development and use. The OECD, the EU, and other international and regional organizations take this approach.

- Establishing a specific agency or body that oversees and regulates artificial neurons in a certain domain or sector, such as health, education, or defense. This could involve setting standards, conducting audits, enforcing rules, and imposing sanctions.

- Creating a framework or mechanism that allows for the participation and consultation of various stakeholders and experts, such as researchers, developers, users, regulators, and the public. This could foster trust, collaboration, and social benefit.

- Implementing a responsible AI initiative within organizations that deploy artificial neurons, which involves adopting principles and practices that ensure accountability, transparency, privacy, security, and fairness. This could also involve creating review boards, ethics committees, or ombudspersons.

History

The history of artificial neurons dates back to the mid-20th century, when researchers such as Warren McCulloch, Walter Pitts, Donald Hebb, and Frank Rosenblatt proposed mathematical models and devices that mimicked the behavior of biological neurons in a neural network.

Some of the milestones in the history of artificial neurons are:

- In 1943, McCulloch and Pitts created a model of the neuron that is still used today in artificial neural networks. This model is divided into two parts: a summation over weighted inputs and an output function of the sum.

- In 1949, Donald Hebb published “The Organization of Behavior”, which illustrated a law for synaptic neuron learning. This law, later known as Hebbian Learning in honor of Donald Hebb, is one of artificial neural networks’ most straightforward learning rules.

- In 1958, Frank Rosenblatt created the Perceptron, an attempt to use neural network techniques for character recognition. The Perceptron was a linear system useful for solving problems where the input classes were linearly separable in the input space.

- In 1969, Marvin Minsky and Seymour Papert published the book “Perceptrons”, which criticized the limitations of the Perceptron and artificial neural network research. This book led to a period of stagnation in the field, known as the “AI winter”.

- In 1986, David Rumelhart, Geoffrey Hinton, and Ronald Williams rediscovered the backpropagation algorithm, a gradient-based algorithm used with artificial neural networks for error minimization and curve-fitting. This algorithm enabled the training of multi-layer neural networks and sparked a revival of interest in the field.

- In the late 1980s and 1990s, various types and architectures of artificial neural networks were developed and applied to various domains and challenges, such as natural language processing, computer vision, robotics, and speech recognition.

- In the 2000s and 2010s, the availability of large-scale data sets, powerful computing resources, and improved algorithms led to deep learning, a branch of machine learning that uses deep neural networks to achieve state-of-the-art results in many tasks.

Tools and Services

Artificial neuron tools and services are software and hardware platforms that enable the creation, training, testing, deployment, and management of artificial neurons and neural networks. Here are some examples:

- Frameworks and libraries: These programming tools provide functions and classes to build and manipulate artificial neurons and neural networks. Some popular frameworks and libraries are TensorFlow, PyTorch, Keras, and Scikit-learn.

- APIs and cloud services: These web-based tools provide access to pre-trained or customizable artificial neurons and neural networks through a standard interface. Some popular APIs and cloud services are Google Cloud AI Platform, Amazon SageMaker, Microsoft Azure Machine Learning, and IBM Watson.

- Edge devices: These hardware devices can run artificial neurons and neural networks locally without relying on a cloud server. Some popular edge devices are NVIDIA Jetson, Google Coral, Intel Neural Compute Stick, and Raspberry Pi.

How to Get Started?

These are the ways you can get started with artificial neurons:

- Choose a programming language and a framework or library that supports artificial neurons and neural networks. Popular languages are Python and JavaScript, and popular frameworks and libraries include TensorFlow, PyTorch, Keras, and Synaptic.js.

- Learn the basics of artificial neurons, such as how they work, their components, their training, and their applications. You can find many tutorials and courses online that explain these concepts.

- Practice using simple datasets and examples to build and train artificial neurons and neural networks. You can start with a single neuron that performs a binary classification task, such as predicting whether a student will pass or fail an exam based on their study hours and sleep hours.

- Experiment with different parameters, such as weights, biases, activation functions, learning rates, and number of epochs, and observe how they affect the performance and accuracy of your artificial neurons and neural networks. You can also try different types and architectures of neural networks, such as multi-layer perceptrons, convolutional neural networks, recurrent neural networks, and spiking neural networks.

- Apply your artificial neurons and neural networks to real-world problems and challenges, such as natural language processing, computer vision, robotics, healthcare, security, and social good. You can find many datasets and projects online that you can use as inspiration or reference.
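
As a starting point for the practice and experimentation steps above, here is a small sketch of a single neuron that predicts pass or fail from study hours and sleep hours. Everything specific in it is an assumption made for illustration: the eight student records are invented, the features are scaled by assuming at most 10 hours, and the learning rates and epoch counts are arbitrary values to compare. It also measures accuracy on the training data only, which is fine for a first experiment but not for a real evaluation.

```python
import math

# Hypothetical data: (study hours, sleep hours) -> 1 = pass, 0 = fail.
STUDENTS = [
    ((1, 8), 0), ((2, 5), 0), ((2, 7), 0), ((3, 6), 0),
    ((6, 6), 1), ((7, 8), 1), ((8, 5), 1), ((9, 7), 1),
]

def scale(hours):
    """Bring both features into a 0-1 range (assumes at most 10 hours)."""
    return [h / 10.0 for h in hours]

def predict_probability(hours, weights, bias):
    """Sigmoid neuron: estimated probability that the student passes."""
    z = sum(w * x for w, x in zip(weights, scale(hours))) + bias
    return 1.0 / (1.0 + math.exp(-z))

def train(data, learning_rate, epochs):
    """Stochastic gradient descent starting from zero weights and bias."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for hours, label in data:
            error = predict_probability(hours, weights, bias) - label
            # Gradient of the cross-entropy loss for a sigmoid neuron.
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, scale(hours))]
            bias -= learning_rate * error
    return weights, bias

def accuracy(data, weights, bias):
    """Fraction of students whose predicted class matches the label."""
    correct = sum(
        (predict_probability(hours, weights, bias) >= 0.5) == bool(label)
        for hours, label in data
    )
    return correct / len(data)

# Try a few learning rates and epoch counts and compare the results.
for learning_rate, epochs in [(0.001, 10), (0.1, 200), (0.5, 2000)]:
    w, b = train(STUDENTS, learning_rate, epochs)
    print(learning_rate, epochs, accuracy(STUDENTS, w, b))
```

Changing one setting at a time, such as the learning rate while keeping the epochs fixed, makes it easier to see which parameter is responsible for a change in accuracy.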

Best Practices

Some of the best practices for getting the most from artificial neurons are:

- Choosing the right type and architecture of artificial neurons and neural networks for the problem, such as multi-layer perceptrons, convolutional neural networks, recurrent neural networks, and spiking neural networks.

- Preprocessing and normalizing the data to make it suitable for artificial neurons and neural networks, such as removing noise, outliers, and missing values, scaling and transforming the features, and balancing the classes.

- Selecting the appropriate parameters and hyperparameters for artificial neurons and neural networks, such as weights, biases, activation functions, learning rates, number of epochs, batch size, and regularization.

- Using effective optimization and learning algorithms for artificial neurons and neural networks, such as gradient descent, backpropagation, stochastic gradient descent, momentum, adaptive learning rates, and dropout.

- Evaluating and validating the performance and accuracy of artificial neurons and neural networks using suitable metrics and techniques, such as confusion matrix, accuracy, precision, recall, F1-score, ROC curve, AUC, cross-validation, and testing.

- Comparing and benchmarking the results of artificial neurons and neural networks with other methods and models, such as traditional machine learning algorithms, statistical methods, and human experts.

- Explaining and interpreting the outcomes and decisions of artificial neurons and neural networks using methods and tools, such as feature importance, saliency maps, attention mechanisms, and LIME.

- Ensuring the ethical and responsible use of artificial neurons and neural networks by addressing the potential risks and harms, such as bias, discrimination, privacy, security, accountability, transparency, and explainability.
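
Two of the practices above, scaling features and evaluating with a confusion matrix, are easy to try by hand. The sketch below is one possible way to do it in plain Python. The function names and the sample labels are invented for this example, and libraries such as scikit-learn provide their own, more complete versions of these metrics.

```python
def min_max_scale(values):
    """Rescale a list of numbers to the 0-1 range."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]
    return [(v - low) / (high - low) for v in values]

def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for binary labels (0 or 1)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn

def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1-score from the confusion counts."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

# Hypothetical labels and predictions from some trained model.
actual = [1, 0, 1, 1, 0, 0, 1, 0]
predicted = [1, 0, 0, 1, 0, 1, 1, 0]

print(min_max_scale([2, 4, 6]))
print(confusion_counts(actual, predicted))
print(classification_metrics(actual, predicted))
```

Looking at precision and recall alongside accuracy matters most when the classes are imbalanced, because a model can score high accuracy simply by predicting the majority class.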

Related Terms

Here are the various terms related to artificial neurons:

- Weights: These values determine how much influence each input has on the output of an artificial neuron. They can be represented as a matrix or a vector.

- Bias: This is a constant term that is added to the weighted sum of the inputs to shift the output of an artificial neuron. It can be positive or negative, loosely corresponding to a biological neuron’s threshold potential.

- Activation function: This nonlinear function determines how the artificial neuron responds to the input. It can have different shapes, such as sigmoid, ReLU, or tanh. It can also be monotonic, oscillating, or unbounded.

- Threshold: This value is compared to the net input of an artificial neuron to produce a binary output. It can be used to define the activation function as a step function.

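- Learning rate: This parameter controls how fast an artificial neuron’s weights and biases are updated during the learning process. It is a positive value, typically between 0 and 1 and often much smaller than 1. It affects the learning algorithm’s convergence and stability: too high a value can make training unstable, and too low a value can make it slow.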

- Target value: This is the desired output of an artificial neuron for a given input. It is used to measure the error or the difference between the actual output and the target value.

- Error: This measures an artificial neuron’s performance on a given input. A loss function, such as mean squared error or cross-entropy, calculates it. It is used to update the weights and biases of an artificial neuron during the learning process.

Avoiding Overfitting

Overfitting is a common problem in which an artificial neural network fits the training data too closely, noise included, and fails to generalize well to new data. There are several techniques to avoid overfitting in artificial neural networks, such as:

- Simplifying the model: Reducing the number of layers, nodes, or parameters can prevent the model from being too complex and fitting the noise in the data.

- Early stopping: This method stops the training process when the validation error starts to increase, indicating the model is beginning to overfit the training data.

- Data augmentation: This is a technique of increasing the size and diversity of the training data by applying transformations, such as rotation, scaling, cropping, flipping, or adding noise. This can help the model learn more general features and reduce overfitting.

- Regularization: This is a method of adding a penalty term to the loss function that depends on the magnitude of the weights or biases of the model. This can prevent the model from having large weights or biases that can cause overfitting. Different types of regularization exist, such as L1, L2, or elastic net.

- Dropout: This is a technique of randomly dropping out some nodes or connections in the network during the training process. This can prevent the model from relying too much on specific nodes or connections and reduce overfitting.
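
Three of the techniques above, regularization, early stopping, and dropout, can each be expressed as a small helper function. The sketch below is illustrative only: the function names, the `patience` rule for early stopping, the "inverted dropout" scaling, and the sample numbers are assumptions for this example, and deep learning frameworks ship their own versions of all three.

```python
import random

def l1_penalty(weights, strength):
    """L1 regularization: penalize the sum of absolute weights."""
    return strength * sum(abs(w) for w in weights)

def l2_penalty(weights, strength):
    """L2 regularization: penalize the sum of squared weights."""
    return strength * sum(w * w for w in weights)

def early_stopping_epoch(validation_losses, patience=2):
    """Return the epoch at which training would stop.

    Training stops once the validation loss has failed to improve
    for `patience` epochs in a row.
    """
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(validation_losses):
        if loss < best:
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(validation_losses) - 1

def dropout(activations, rate, rng):
    """Inverted dropout: zero each activation with probability `rate`
    and scale the survivors so the expected value stays the same."""
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

# The penalty is added to the loss, so large weights make the loss worse.
data_loss = 0.30
weights = [1.0, -2.0]
print(data_loss + l2_penalty(weights, strength=0.1))

# Validation loss improves, then starts rising: stop after two bad epochs.
print(early_stopping_epoch([0.9, 0.7, 0.6, 0.65, 0.7, 0.8], patience=2))

# Drop roughly half of the activations during one training step.
print(dropout([0.2, 0.9, 0.5, 0.7], rate=0.5, rng=random.Random(0)))
```

Dropout is applied only while training. At prediction time, all nodes are kept, which is why the surviving activations are scaled up during training in this version.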

Learn more

If you want to learn more about artificial neurons, here are some resources that you can check out:

Research: You can find many research papers and articles on artificial neurons online, covering their history, theory, design, implementation, and evaluation. A few examples are:

- Artificial neurons developed to fight disease, a news article that reports on how scientists have built artificial neurons that fully mimic human brain cells and could be used to treat diseases such as Alzheimer’s.

- Artificial neurons compute faster than the human brain, a news article that describes how superconducting computing chips modeled after neurons can process information faster and more efficiently than the human brain.

- Artificial neurons mimic complex brain abilities for next-generation AI computing, a research paper that presents a network of artificial neurons that can adapt and learn from their environment using optical signals instead of electrical ones.

Books: You can find many books on artificial neurons that provide comprehensive and in-depth knowledge of the subject, covering both the theoretical and practical aspects. Some examples are:

- Neural Networks and Deep Learning is a book that introduces artificial neural networks and deep learning methods, explaining how they work, their components, their training, and their applications.

- Fundamentals of Artificial Neural Networks and Deep Learning is a book that describes the fundamentals of artificial neural networks and deep learning methods, their inspiration, models, algorithms, architectures, and applications.

- Neural Networks: Easy Guide To Artificial Neural Networks is a book that provides a simple and easy guide to artificial neural networks, covering their basics, types, examples, and applications.

Courses: You can find many online courses on artificial neurons that offer interactive and hands-on learning experiences, covering the basics and advanced topics. Some examples are:

- Neural Networks and Deep Learning is a course that teaches you how to build and train your own artificial neural networks and deep neural networks using Python and TensorFlow.

- Introduction to Neural Networks is a course that delves into the inner machinery of artificial neural networks and how they work using experiments and simulations.

- Learn Artificial Neural Network From Scratch in Python, a course that helps you learn how to create a neural network model in Python from scratch, using numpy and matplotlib.

I hope you enjoyed this discussion. I believe that open dialogue and diverse perspectives are essential for fostering a deeper understanding of complex issues. Therefore, I encourage you to share your thoughts and opinions in the comments below. Engage with other readers, challenge assumptions, and contribute to a rich and meaningful discussion. Your insights are valuable, and I welcome you to participate actively in this intellectual exchange.

Together, we can explore new ideas, expand our horizons, and generate fresh perspectives.

Learner-in-Chief at Future Disruptor. A futurist, entrepreneur, and management consultant, who is passionate about learning, researching, experimenting, and building solutions through ideas and technologies that will shape our future.
